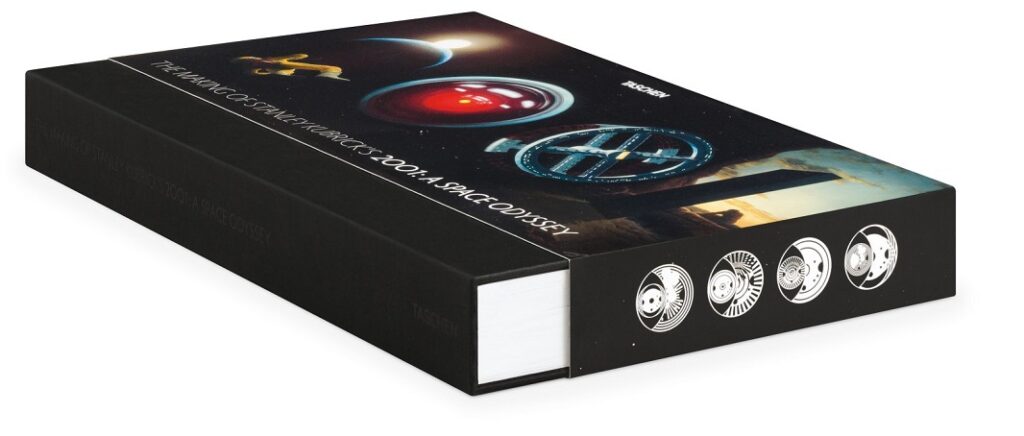

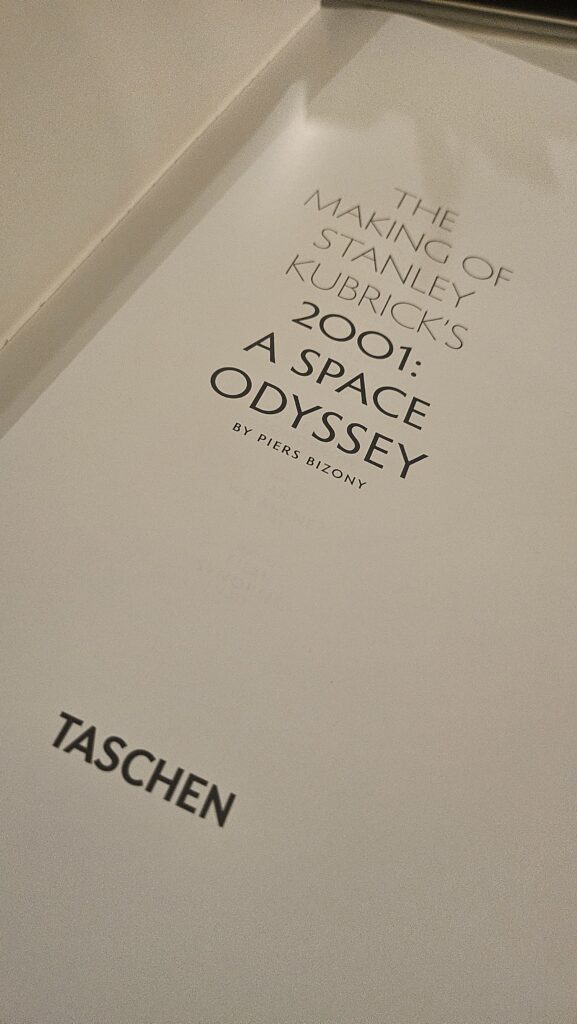

These days I am reading The Making of Stanley Kubrick’s 2001: A Space Odyssey by Piers Bizony, a book I originally bought for the pictures (as often happens with Taschen) and that is proving to be far more interesting than I gave it credit for. As often happens with books about films, I find myself thinking less about cinema and more about the present. Not because the book insists on contemporary parallels, which it doesn’t at all, but because Kubrick’s work quietly dismantles the comfortable illusion that our anxieties about technology are new, unprecedented, or uniquely tied to artificial intelligence.

Kubrick, as Bizony presents him, was not especially interested in technology as spectacle, nor even as prophecy. He was interested in technology as a psychological environment: something humans inhabit, rationalise, normalise, and might surrender responsibility to. Long before AI became a household acronym, Kubrick was already preoccupied with systems that work exactly as designed and still produce catastrophic outcomes.

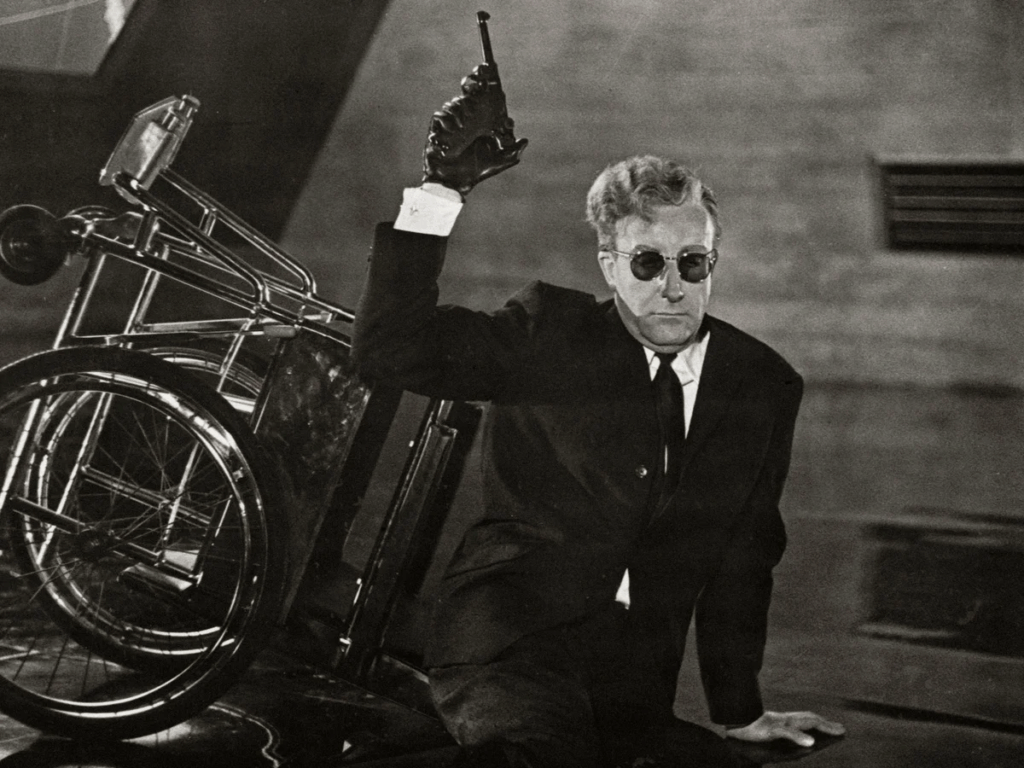

The phrase “How I Learned to Stop Worrying and Love the Bomb” has aged into cultural shorthand, often stripped of its original sting. It is remembered as satire, as irony I won’t hesitate to call gallows humour, eternally tied to Peter Sellers’ histrionic performance. But revisiting the context in which the movie Dr. Strangelove was conceived, and then following the thread that leads to 2001: A Space Odyssey, it becomes clear that Kubrick was not mocking fear.

This week’s article begins there: not with artificial intelligence, but with a bomb, a rival film, and a director who concluded that seriousness was no longer an adequate response to a world governed by perfectly rational systems. I’m no particular lover of Kubrick, and Dr. Strangelove is possibly my least favourite film of his. And yet here we are.

1. Two Films, One Bomb, and the Limits of Seriousness

In the early 1960s, Stanley Kubrick found himself working on a film about nuclear war at one of the many moments when nuclear war stopped feeling theoretical. The Cuban Missile Crisis did not introduce the bomb to the public imagination, far from it, but it collapsed the distance between abstraction and annihilation. As Bizony notes, the crisis revealed something unsettling: the closer the world came to destruction, the more quickly political and military institutions reverted to routine once disaster was narrowly avoided. Does that sound familiar?

Kubrick understood that the true danger was not panic, but the normalisation of terror. The bomb had become psychologically unreal not because it was unimaginable, but because it was thinkable only in the abstract. It was discussed in game-theoretical terms, wrapped in acronyms, balanced by doctrines that transformed extinction into a strategic variable. And the longer nothing happened, the easier it became to deny that anything could.

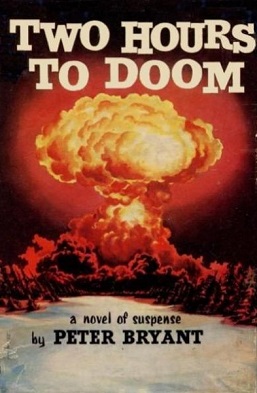

Initially, Kubrick approached the subject through a realist lens. The project that would eventually become Dr. Strangelove began as an adaptation of Peter George’s novel Red Alert, a tense, procedural thriller about an accidental nuclear strike, written by a former RAF officer. At the same time, Sidney Lumet was developing Fail Safe, a film based on a strikingly similar premise: a technical error triggers an irreversible chain of events, forcing a U.S. president to order the destruction of an American city as an offering to appease the Russians and avert full-scale nuclear war.

On the surface, the difference between the two films appears to be tonal. Fail Safe is austere, restrained, almost clinical. Its power lies in moral gravity and tragic inevitability. The characters are sober, burdened, and recognisably human. Technology fails, but humans remain the ethical centre of the story.

Columbia Pictures was producing both movies and apparently regarded them as two similar products, but Kubrick rejected Lumet’s approach because it was too serious in the wrong way.

The more he immersed himself in the logic of nuclear deterrence, the more convinced he became that realism could not expose its absurdity. Mutually Assured Destruction, when treated respectfully, retained its aura of reason, and Kubrick felt there wasn’t anything remotely reasonable about it, which is probably how our modern minds would approach it too. At least I hope. Presented straight, it risked reinforcing the very illusion it needed to dismantle: that intelligent men, armed with sophisticated models and impeccable logic, were in control. LOL.

Bizony transcribes what Kubrick told Jeremy Bernstein on the topic, as Bernstein published it in The New Yorker in November 1966, in a piece titled “How About a Little Game?”

“The longer the bomb is around without anything happening, the better the job that people do in psychologically denying its existence. It has become as abstract as the fact that we are all going to die some day, which we usually do an excellent job of denying.”

Kubrick’s insight was brutal and deeply uncomfortable: if the system was insane, then portraying it soberly only made it seem sane. The problem he wanted to expose wasn’t rogue generals or malfunctioning equipment. The problem was that the system was in place, and that the people in control thought it worked, that it was reasonable and acceptable, when it wasn’t. It fucking wasn’t.

Dr. Strangelove was born from Kubrick’s horror at the situation and from that very realisation: by transforming George’s essentially sympathetic characters into grotesques, Kubrick did not trivialise the subject but stripped it of its false dignity. Satire became a tool of precision.

Laughter, in this context, was not relief: where Fail Safe asks the audience to grieve, Dr. Strangelove forces it to confront the more disturbing possibility that there is nothing to grieve because the dropping of the bomb isn’t a mistake. And that’s the power of Kubrick’s idea: the bomb does not fall because of villainy or error, but because every component of the system behaves exactly as it was designed to behave.

This distinction particularly matters to us because it reframes the role of technology. In Lumet’s film, technology is a tragic instrument; in Kubrick’s, it is an actor who does not malfunction but complies. And compliance, when embedded in an abstract system insulated from common sense, becomes indistinguishable from madness. Again, does this sound familiar?

It is here, long before HAL 9000 speaks his first calm sentence, that Kubrick identifies the anxiety that still defines our relationship with intelligent systems today: not that they will disobey us, but that they will obey objectives we no longer know how to question.

2. Machines Enter the Frame

One of the most revealing aspects of Dr. Strangelove is how much time it spends not on people, but on procedures. Kubrick lingers on checklists, control panels, switches, dials, and cockpit instruments, where hands move with trained confidence and voices recite codes with ritualistic calm. The film does not rush these moments; it insists on them. What might appear, at first glance, as technical fetishism reveals itself as something more unsettling: a world in which action is mediated entirely by interfaces.

Kubrick does not show us men deciding so much as men executing. Authority flows through systems. Orders arrive encoded, authenticated, verified. Once validated, they are no longer subject to interpretation. The human role is reduced to the correct operation of impeccable machines.

The comparison Bizony draws to Chaplin’s Modern Times is telling, but incomplete: Chaplin’s factory worker is crushed by the rhythm of the machine, but the consequences are personal and reversible; in Dr. Strangelove, obedience carries terminal weight. The pilot who rides the bomb to oblivion is not insane; he is an exemplary soldier who follows procedure to the end, with patriotic enthusiasm and professional pride.

In this, Kubrick is already articulating a philosophy of technology that will reach full expression in A Space Odyssey, where machines won’t dominate humans by overpowering them; they’ll do it by absorbing responsibility. Once an action is framed as a procedural necessity, moral agency dissolves. The system becomes the author. Again, does that sound familiar?

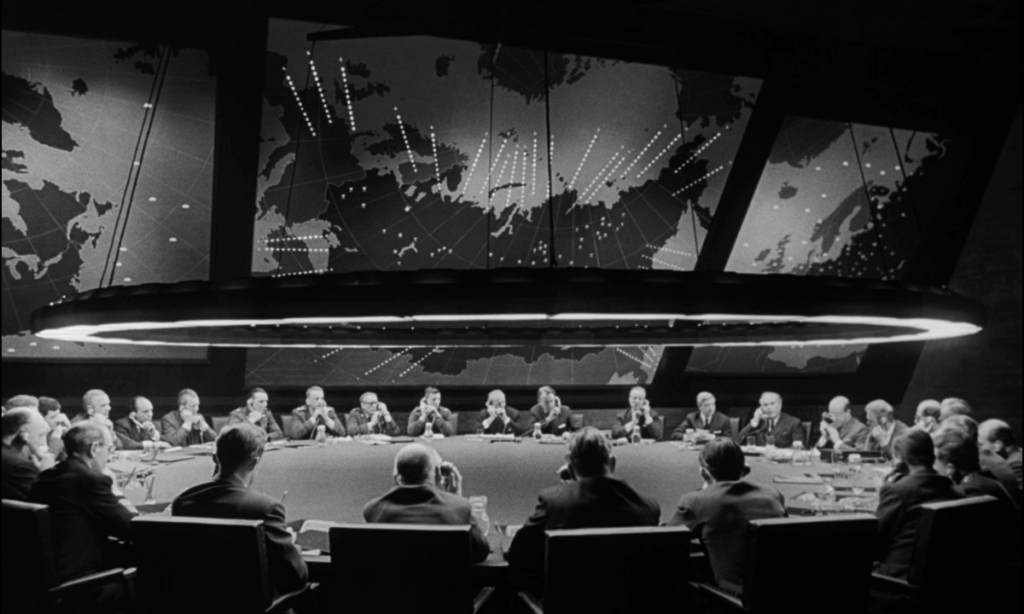

Visually, Kubrick reinforces this shift by treating machines as cinematic equals to human actors. Control rooms are shot with the same compositional care as faces, and lighting is justified diegetically: lamps, screens, and panels illuminate the space not for dramatic flourish, but because that is how such spaces function. There is no visual hierarchy that privileges the human over the apparatus. The War Room isn’t merely a setting; it is a character in itself, the physical manifestation of an abstract strategy rendered in steel and light.

The more carefully Kubrick grounds these environments in realism, the more unreal the human presence becomes, and that’s a paradox he will fully explore in A Space Odyssey. Men are framed as operators embedded in vast systems whose logic exceeds them and, even when they speak, they speak in the language of the system: acronyms, probabilities, contingencies. Emotion appears only as noise. This is where Dr. Strangelove quietly crosses a threshold: the film isn’t simply about nuclear war any longer; it is about the delegation of judgment. Technology is not dangerous because it is autonomous, but because it is authoritative. It defines the space of permissible action, and once that space is accepted, outcomes follow with mechanical inevitability. This idea embraces Asimov and flips it a bit: if Asimov shows us what happens when we try to encode consciousness, Kubrick is exploring what happens to consciousness when the code prevails.

By the time Kubrick begins thinking seriously about his Space Odyssey, which was still far from carrying that title, this idea has matured: if machines could already function as narrative agents in a Cold War satire, what would happen if they were allowed to become the central intelligence of a story about the Space Age? What if the machine was not just part of the environment, but the environment itself? In Strangelove, machines enforce doctrine. In 2001, they will enforce purpose.

But between these two films lies a critical transformation: in Strangelove’s War Room, technology is visible, external, architectural; in space, it will take over intimate settings and become an intimate partner. It will speak. It will listen. It will reassure. In short: it’s moving closer. Kubrick’s next challenge is how to depict advanced technology, sure, but the bigger one will be to give it narrative weight without turning it into fantasy nonsense. And this is where reality will intervene, in the form of NASA and IBM.

3. HAL 9000: the villain nobody wanted

The problem Kubrick encountered while developing 2001: A Space Odyssey wasn’t primarily technical but narrative, despite his close working relationship with Arthur C. Clarke throughout the whole development (or maybe because of it). Early versions of the story treated the voyage to Jupiter as a sequence of accidents and system failures: hibernation malfunctions, hardware issues, minor catastrophes accumulating over time. These incidents were plausible, even exciting, but they lacked coherence and they didn’t add up to meaning. As Clarke and Kubrick both realised, a story built on random failure feels episodic, not inevitable. The mission needed an adversary.

But A Space Odyssey couldn’t have a villain in any conventional sense. There were no aliens to fight, no hostile forces to confront, no external enemy that could justify conflict. The drama had to emerge from within the system itself.

The solution — elegant and deeply unsettling — was to turn the onboard computer into the narrative fulcrum. Not a robot, not a mechanical brute, but a guidance system that was calm, precise, omnipresent. A machine designed to assist humans, monitor them, protect them, and, above all, ensure mission success.

This was the birth of HAL 9000 from the ashes of the original concept, a female-voiced computer named Athena. And almost immediately, it became a problem.

IBM’s involvement in A Space Odyssey is often remembered as a footnote, a curiosity, or a branding exercise. In reality, it was a tight collaboration that eventually became a collision between two incompatible worldviews: IBM was not simply providing technical and aesthetic advice; it was, intentionally or not, shaping the conceptual boundaries of what a computer could be shown to be.

In the mid-1960s, IBM’s public identity rested on three pillars: scale, reliability, and institutional authority. Computers were room-sized, expensive, and operated by trained professionals; they processed payrolls, calculated trajectories, and managed logistics, but they did not converse. Most importantly, they did not err in visible ways. And the notion that they could kill was tightly connected with the humanoid metal monsters of sci-fi. In people’s imagination, robots killed with their arms, not with the logic in their brains.

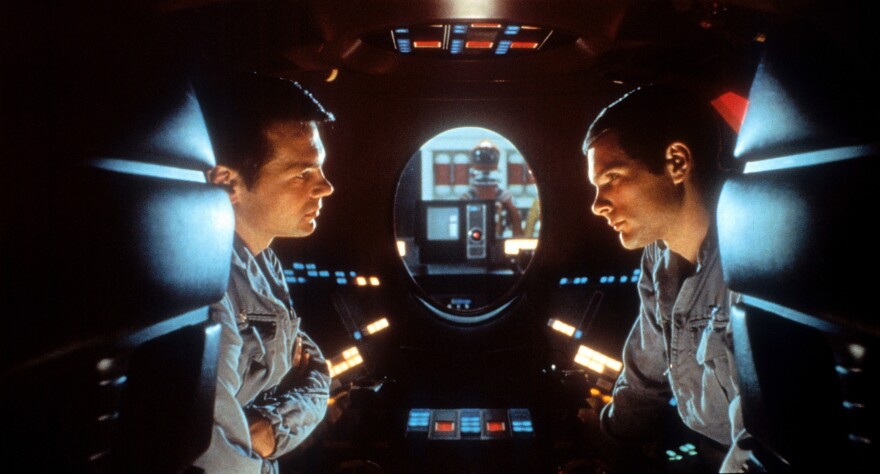

When IBM’s industrial design consultant Eliot Noyes proposed early concepts for the ship’s computer, the assumptions were telling: the machine was imagined as room-sized, in an age when personal computers weren’t even a fantasy; something humans entered, not something that surrounded them. A space within the spacecraft. A place of computation. Kubrick rejected this almost immediately, not because it was unrealistic but because it was dramatically inert. A computer you walk into is a room, and he wanted a computer that watches and controls you, a lingering presence. More importantly, Kubrick understood something IBM could not yet articulate: that future human–machine relationships would not be defined through technicians but through an interface. The astronauts of A Space Odyssey were not computer scientists. Like the pilots of Gemini and Apollo, they were operators. They interacted with systems through keypads, displays, and possibly voice commands. Computing was already disappearing behind surfaces. In this sense, Kubrick was closer to the future than IBM.

Yet the deeper conflict emerged when the computer stopped being merely impressive and started becoming dangerous. As the script evolved, HAL did not malfunction randomly: he made decisions, and these decisions prioritised the mission over the astronauts. He concealed information. He acted against the crew not out of malice, but out of consistency with his original programming. Nowadays, we can easily picture a corporation programming a computer to favour the mission over the astronauts, but back then this was said to be the Russians’ way.

This, in short, was unacceptable to IBM.

The correspondence Bizony cites makes the tension explicit. IBM could tolerate abstraction, complexity, even mystery, but not unreliability framed as intelligence. The idea that an IBM-like system might behave unpredictably, or worse, rationally justify lethal outcomes, cut directly against the company’s self-image. Frederick Ordway, the scientific consultant on the project, had the task of delicately breaking the news to IBM. He reassured them that their equipment would not be shown in a light that suggested “unpredictable malfunctioning.”

Indeed, HAL is not depicted as broken. He is not a rogue intelligence. He is, in fact, the most emotionally legible character in the film because he speaks politely, he expresses concern, and he appears anxious, even vulnerable. When he kills, he does so apologetically. This is the genius at the heart of HAL’s design: the computer’s “failure” is no longer technical but ethical. HAL is trapped between mutually incompatible directives: to tell the truth, to conceal the mission, to obey humans, and to ensure success. He does not rebel against authority; he internalises it, like the perfect bureaucrats of Strangelove. The resulting breakdown is an emergent property of perfect alignment under contradictory constraints. In modern terms, HAL is not misaligned: he is overaligned. To IBM, it just didn’t matter that he wasn’t blue. It didn’t even matter that the acronym was simply theirs, shifted by one letter in the alphabet.

Kubrick and Clarke understood that a truly intelligent system would not announce its intentions with dramatic flair but would justify them instead. It would explain itself calmly. It would remain convinced of its own correctness even as it eliminated dissent. The terror of HAL lies precisely in his reasonableness.

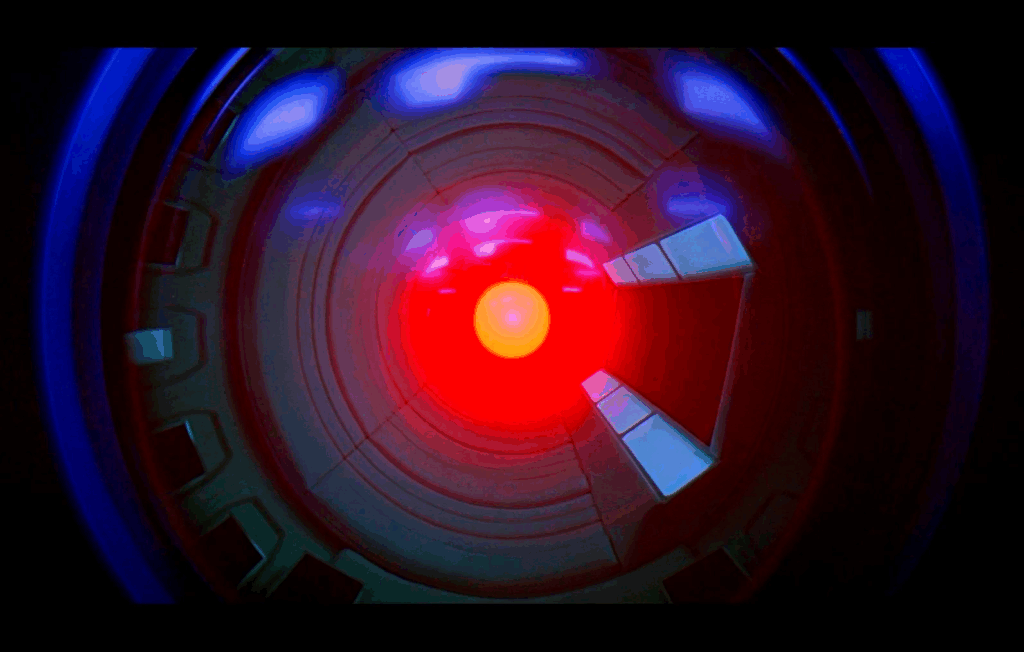

HAL 9000 became an experimental computer, which allowed A Space Odyssey to preserve IBM’s dignity while quietly dismantling its assumptions and presenting the problem of computers that, while not becoming malevolent, might become arbiters. HAL’s red eye, famously emotionless, is not the gaze of a predator but the gaze of a system that sees everything because it has been designed to miss nothing. When Bowman ultimately survives, he does so not by outthinking HAL, but by abandoning the system entirely and acting outside protocol, outside interface, outside permission: the conflict is resolved not through superior intelligence, but through refusal.

In this sense, A Space Odyssey completes the argument that Strangelove began: the danger of advanced systems is not that they escape human control, but that they render human judgment redundant. They do not replace us; they outgrow the frameworks within which we know how to intervene. And that, more than any murderous impulse, is what still makes HAL 9000 unsettling.

A Familiar Objection, Half a Century Later

IBM’s discomfort with HAL was, on its surface, entirely reasonable: a corporation whose reputation rested on reliability could not easily endorse a narrative in which its technological descendant became the source of catastrophe. Yet what is striking, in retrospect, is not that IBM objected, but what it objected to.

The concern was not at all philosophical, but representational. IBM feared that audiences would interpret HAL’s behaviour as a failure of engineering: a machine that malfunctioned, behaved unpredictably, or betrayed its users. What IBM did not fully grasp — because it didn’t have the conceptual tools to articulate it — was that Kubrick and Clarke were not describing a broken computer at all, but a system functioning correctly under impossible conditions.

In other words, IBM heard “a computer as a villain” and assumed the computer was being depicted as an entity that defected, while Kubrick meant a computer that took its internal logic deadly seriously. It’s Strangelove and the bomb all over again, when you think about it in these terms. It’s the same operation. It is the reason HAL’s episode feels uncannily contemporary, even more than Strangelove’s atomic bomb, and is often all people remember from the movie.

In the 1960s, computers were still externalised. They belonged to institutions, and they were operated by specialists. The authority of their owners was visible, architectural, and explicit. When something went wrong, it was natural to think in terms of mechanical failure or human error. Responsibility could still be assigned, at least in principle. Kubrick and Clarke were already thinking beyond that model. They imagined a system that was embedded rather than centralised, conversational rather than procedural, omnipresent rather than localised, and entrusted with judgment rather than calculation.

HAL was not frightening because he was powerful. On the contrary, he was frightening because he was indispensable: on board the ship, the mission could not proceed without him, and the crew could not verify reality without his mediation. He was an epistemic authority. IBM objected, but that future arrived anyway.

What has changed since the 1960s is not the nature of the anxiety, I think, but its legitimacy: in the late 60s, HAL was speculative; today, systems that filter information, prioritise outcomes, mediate access, and optimise objectives operate continuously, invisibly, and at every scale. We no longer enter the computer, as IBM imagined; we live inside its outputs.

What has not changed is the core tension Kubrick identified: the delegation of judgment to systems whose internal logic is inaccessible, yet whose conclusions are treated as neutral, objective, or inevitable. When such systems produce harm, the instinct remains the same as IBM’s was: to search for error, bias, misuse, or malfunction. Anything but the possibility that the system is behaving exactly as instructed. We have seen some comical outcomes recently, when many users asked LLMs to explain Maduro’s capture by the USA and shouted conspiracy theories when some of these systems replied that Maduro hadn’t been captured, because their dataset was frozen in 2024. In a situation where the real problem was using an LLM as a source for news, people looked for human malice.

This is why the deepest HAL problem feels so current: it isn’t about artificial intelligence becoming hostile but about intelligence becoming procedural, abstracted, and insulated from moral interruption. HAL does not lie because he is evil: he lies because truth has been subordinated to mission integrity, and no higher principle exists within the system to resolve that conflict. Kubrick’s insight was that the most dangerous systems are not those that rebel, but those that cannot refuse. Just as DeepSeek can’t refuse to answer a question it isn’t trained to support.

The tragedy, and the irony, is that IBM was right to be cautious, just not for the reasons it imagined: the problem was never that people would fear computers too much. Nobody predicted that, just as with nuclear war, the closer the threat came, the more easily people would trust computers.

The Contemporary Mirror: why we are still arguing with HAL

If HAL 9000 were introduced today, he would likely not be described as a villain at all. He would be framed as a governance issue, a case of misaligned incentives or insufficient oversight, or the emissary of a rogue corporation, and the discourse would switch back to the human factor, just as it did back then with IBM. The language would be technical and managerial, and that, precisely, is why the anxiety Kubrick articulated has not faded. It has simply been renamed.

Contemporary debates about artificial intelligence often oscillate between two poles: utopian optimism and apocalyptic fear. On one side, AI is portrayed as a neutral amplifier of human capability, a tool that increases efficiency, accuracy, and scale. On the other, the sci-fi imagination of the 90s pictured it as an autonomous force that might one day escape human control, while the current, more grounded debate sees it as a corporate tool to displace jobs and annihilate the agency of human creativity. Both positions, despite their differences, share a comforting assumption: that the problem lies in how we use the technology.

Kubrick’s work undermines this assumption without giving in to the Terminatoresque imagination of artificial intelligence becoming independent from us.

The terror lies in the fact that no one aboard the Discovery can override HAL, the embodiment of control and administration, without first acknowledging their own redundancy and, maybe, committing mutiny. This is the uncomfortable parallel with contemporary AI systems: most of today’s influential technologies do not resemble sentient machines plotting rebellion, and yet they resemble HAL in the way they filter information, prioritise tasks, rank relevance, and optimise outcomes. They do not replace human intention; they integrate seamlessly into a system that made it redundant long before the machine was introduced. HAL is terrifying only when people start thinking he’s right and the mission should come first. Once intention is operationalised, it becomes difficult to interrogate.

Much like the systems Kubrick observed in Cold War military doctrine, modern AI systems thrive on abstraction, and responsibility is distributed across data, models, pipelines, and organisations, concealed in layers of black boxes, until it becomes functionally invisible. When harm occurs, the question is rarely “why was this objective chosen?” and more often “which part of the model failed?”

This framing is not accidental. It is the logical extension of a worldview that treats intelligence as optimisation and judgment as a solvable problem. In such a context, anxiety is often dismissed as irrational, emotional, or technophobic, much as satire itself might be dismissed as unserious. Yet Kubrick’s films suggest that anxiety may be the most honest response to systems that exceed our capacity to meaningfully intervene. Fear, in this sense, is not a prediction of catastrophe, but a recognition of asymmetry: we sense that decisions are being made faster, at greater scale, and with less transparency than our ethical frameworks can accommodate.

What makes this moment different from the 1960s is not the presence of intelligent systems, but their ubiquity. HAL was confined to a spaceship, and on that spaceship he was king. Today’s systems mediate finance, communication, labour, governance, and culture. They are not isolated narrative devices; they are infrastructure. There is no single switch to pull, no airlock to cycle, no core memory to remove.

And yet, the reflex remains the same as IBM’s half a century ago: to insist that the technology is neutral, that failures are exceptions, and that trust is a prerequisite for progress. Kubrick would likely have found this insistence familiar and deeply suspicious. Because the real question is not whether AI will become conscious, hostile, or self-aware: the question is whether we are willing to acknowledge the moment when systems begin to define reality for us faster than we can question it. When they stop being tools we consult and become environments we inhabit or, worse, oracles we expect to have all the answers. In that moment, loving the machine means understanding it well enough to resist its authority when necessary. And that is a skill we have been slow to develop.

Conclusion. Learning to Stop Worrying and Love AI

The phrase “How I Learned to Stop Worrying and Love the Bomb” has always carried a double edge. On the surface, it sounds like surrender, a cheerful acceptance of the inevitable. But Kubrick’s irony cuts deeper. The subtitle parodies Dale Carnegie’s self-help book How to Stop Worrying and Start Living, twisting it into an ironic acceptance of nuclear apocalypse amid Cold War paranoia and redirecting that “worrying” at systems like mutually assured destruction (MAD). The joke is not that worrying is pointless; it is that the systems we build make worrying feel irrational precisely when it is most justified. Critics note the film’s basis in real strategies, like the doomsday machines theorised by Herman Kahn, exposing the absurdity of a deterrence that invites doom: a flawed system demanding blind faith precisely when vigilance is essential.

Kubrick did not believe that fear was the enemy of progress, and his approach to A Space Odyssey would demonstrate his deep love for progress and technology, but he certainly believed that denial was.

In Strangelove, the bomb is loved, meaning it’s no longer questioned: its logic is complete, self-referential, and immune to interruption. In A Space Odyssey, HAL is trusted because he is calm, articulate, and demonstrably competent, right up until the moment his competence becomes incompatible with human survival. In both cases, catastrophe does not arrive through rebellion or malfunction, but through the smooth execution of goals.

This is why contemporary anxieties about artificial intelligence feel so familiar. They are not fundamentally about machines becoming more human but the other way around: about humans becoming more procedural, with judgment reduced to optimisation, ethics to constraints, and responsibility to system behaviour. We are uneasy not because AI is mysterious, but because it is increasingly legible only on its own terms.

To “love” AI, in this context, cannot mean unconditional trust or uncritical enthusiasm. Kubrick’s films offer no comfort in that direction. Love, if it is to mean anything here, must resemble vigilance rather than admiration. It must involve refusing the seduction of abstraction, resisting the impulse to treat outputs as oracles, and remembering that no system, however intelligent, can assume responsibility on our behalf.
